Unlock the Power of Precision, Recall, & F1 Score: Metrics Explained Simply

In the world of machine learning and data analysis, precision, recall, and F1 score are three crucial metrics that help evaluate the performance of classification models. These metrics are often used in conjunction with each other to provide a comprehensive understanding of a model's strengths and weaknesses. However, despite their importance, many people struggle to understand what each metric means and how to use them effectively. In this article, we will delve into the world of precision, recall, and F1 score, explaining each metric simply and providing examples to illustrate their use.

What is Precision?

Precision measures how many of the instances a model labels as positive are actually positive. It is defined as the number of true positives (instances correctly predicted as positive) divided by the sum of true positives and false positives (instances incorrectly predicted as positive). In other words, precision answers the question: when the model predicts the positive class, how often is it right?
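
As a minimal sketch, the definition translates into a few lines of Python. The function name `precision` and the counts used below are illustrative assumptions, not measurements from a real system.

```python
def precision(true_positives: int, false_positives: int) -> float:
    """Fraction of positive predictions that are actually positive."""
    predicted_positives = true_positives + false_positives
    if predicted_positives == 0:
        # No positive predictions were made. Returning 0.0 is a convention
        # chosen for this sketch, not a universal rule.
        return 0.0
    return true_positives / predicted_positives


# Hypothetical counts: 60 correct positive predictions and 20 incorrect ones.
example_precision = precision(true_positives=60, false_positives=20)
print(example_precision)  # 0.75
```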

Example of Precision

Let's consider a spam filter that flags 80 emails as spam out of the 100 emails it processes. However, upon manual inspection, it is found that 20 of the flagged emails are actually legitimate, which leaves 60 true positives. In this case, the precision of the spam filter would be 60 / 80 = 0.75, which means that 75% of the emails identified as spam are actually spam.

What is Recall?

Recall, on the other hand, measures how many of the actual positive instances a model finds. It is defined as the number of true positives divided by the sum of true positives and false negatives (positive instances the model missed). In other words, recall measures how thoroughly a model captures the instances that are actually positive.

Example of Recall

Returning to the spam filter example, let's say that out of 100 emails processed, the filter correctly identifies 80 as spam. However, it misses 10 emails that are actually spam. In this case, the recall of the spam filter would be 80 / 90 ≈ 0.89, which means that about 89% of all spam emails are correctly identified.
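
The recall arithmetic can be checked with a short sketch. The function name `recall` is an assumption made for illustration; the counts are the 80 caught and 10 missed spam emails from the example.

```python
def recall(true_positives: int, false_negatives: int) -> float:
    """Fraction of actual positives that the model found."""
    actual_positives = true_positives + false_negatives
    if actual_positives == 0:
        # No actual positives exist. Returning 0.0 is a convention
        # chosen for this sketch, not a universal rule.
        return 0.0
    return true_positives / actual_positives


# 80 spam emails caught, 10 spam emails missed.
spam_recall = recall(true_positives=80, false_negatives=10)
print(f"{spam_recall:.4f}")  # 0.8889
```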

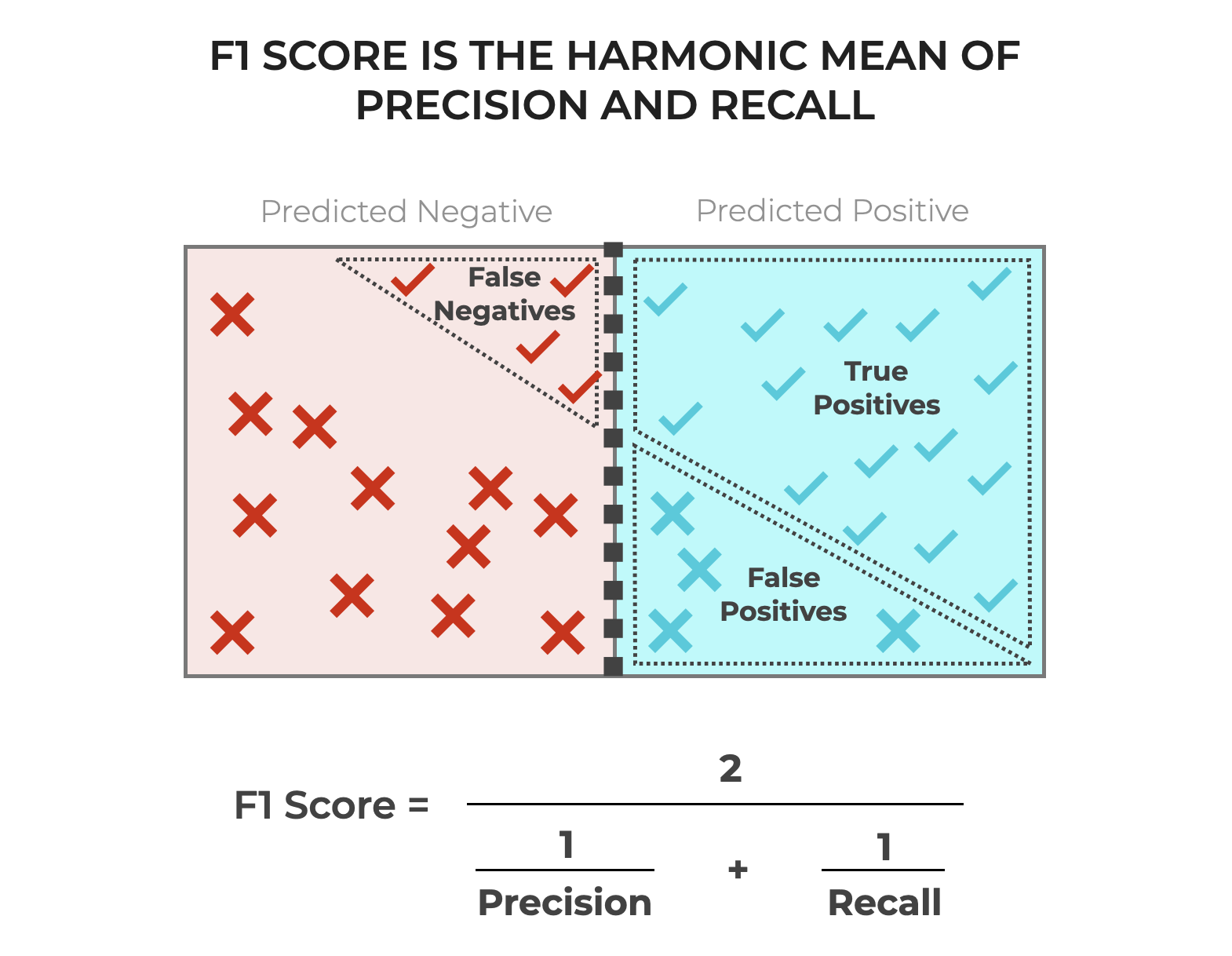

What is F1 Score?

The F1 score is a single measure of a model's performance that takes both precision and recall into account. It is defined as the harmonic mean of precision and recall: F1 = 2 × (precision × recall) / (precision + recall). Unlike a simple arithmetic average, the harmonic mean is pulled toward the lower of the two values, so a model scores well on F1 only when both precision and recall are reasonably high.
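
To see what the harmonic mean does in practice, the sketch below compares it with a plain arithmetic mean for a deliberately lopsided model. The function name `f1_score` and the values 0.9 and 0.1 are hypothetical, chosen only to make the effect visible.

```python
def f1_score(precision: float, recall: float) -> float:
    """Harmonic mean of precision and recall."""
    if precision + recall == 0:
        # Both inputs are zero. Returning 0.0 is a convention
        # chosen for this sketch.
        return 0.0
    return 2 * precision * recall / (precision + recall)


# Hypothetical lopsided model: very precise, but it finds few positives.
p, r = 0.9, 0.1
arithmetic_mean = (p + r) / 2
harmonic_mean = f1_score(p, r)

print(round(arithmetic_mean, 2))  # 0.5
print(round(harmonic_mean, 2))    # 0.18
```

The arithmetic mean hides the weak recall, while the harmonic mean stays close to the smaller value.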

Example of F1 Score

Let's consider the spam filter example again, where precision is 0.75 and recall is 0.89. In this case, the F1 score would be 2 × (0.75 × 0.89) / (0.75 + 0.89) ≈ 0.81, which indicates a relatively good performance of the spam filter. However, if precision were 0.5 and recall were 0.95, the F1 score would be about 0.66, indicating a poorer performance of the spam filter.

Why Use Precision, Recall, & F1 Score?

There are several reasons why precision, recall, and F1 score are used in machine learning and data analysis. Firstly, these metrics provide a more comprehensive understanding of a model's performance than a single metric such as accuracy. Secondly, they separate two kinds of error, false positives and false negatives, whose costs often differ depending on the application: a spam filter that blocks legitimate email fails in a different way from one that lets spam through. Lastly, these metrics can be used to compare the performance of different models and make informed decisions about model selection.
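
The first point can be illustrated with a small sketch. The dataset below is hypothetical: 5 spam emails among 100, and a "model" that never flags anything. All variable names are illustrative.

```python
# Hypothetical imbalanced dataset: 5 spam emails (1) and 95 legitimate ones (0).
y_true = [1] * 5 + [0] * 95
# A useless "model" that predicts "not spam" for every email.
y_pred = [0] * 100

# Count the four confusion-matrix cells.
tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
tn = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 0)

accuracy = (tp + tn) / len(y_true)
# Zero denominators fall back to 0.0, a convention chosen for this sketch.
precision = tp / (tp + fp) if (tp + fp) else 0.0
recall = tp / (tp + fn) if (tp + fn) else 0.0
f1 = 2 * precision * recall / (precision + recall) if (precision + recall) else 0.0

print(accuracy)  # 0.95
print(recall)    # 0.0
print(f1)        # 0.0
```

Accuracy alone suggests a strong model, while recall and F1 show that it never finds a single spam email.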

Common Misconceptions About Precision, Recall, & F1 Score

Despite their importance, precision, recall, and F1 score are often misunderstood. Here are a few common misconceptions:

- Precision and recall are mutually exclusive. In reality, they are complementary metrics that provide a more complete picture of a model's performance. Raising one often costs some of the other, but a good model can score well on both.

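- The F1 score is a substitute for accuracy. While the F1 score balances precision and recall, accuracy is still a useful metric to evaluate a model's performance, especially when the classes are roughly balanced. When the classes are heavily imbalanced, accuracy can be misleading, because a model that always predicts the majority class still scores highly.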

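- Precision, recall, and F1 score are only applicable to classification problems. While these metrics are most commonly used in classification, they also apply to information retrieval tasks such as ranking and recommender systems, typically in the form of precision at k and recall at k. They do not apply directly to regression, where the target is a continuous value.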

Best Practices for Using Precision, Recall, & F1 Score

Here are some best practices for using precision, recall, and F1 score:

- Use precision, recall, and F1 score in conjunction with other metrics, such as accuracy and AUC-ROC.

- Evaluate a model's performance on a representative dataset, taking into account class imbalances and outliers.

- Use precision, recall, and F1 score to compare the performance of different models and make informed decisions about model selection.
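
As a rough sketch of the last practice, the snippet below computes all three metrics for two models on the same labels. The helper `classification_metrics`, the label lists, and both sets of predictions are hypothetical and exist only for illustration.

```python
from typing import Dict, List


def classification_metrics(y_true: List[int], y_pred: List[int]) -> Dict[str, float]:
    """Compute precision, recall, and F1 for binary labels (1 = positive)."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    # Zero denominators fall back to 0.0, a convention chosen for this sketch.
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    f1 = 2 * precision * recall / (precision + recall) if (precision + recall) else 0.0
    return {"precision": precision, "recall": recall, "f1": f1}


# Hypothetical ground truth: 1 = spam, 0 = legitimate.
y_true = [1, 1, 1, 1, 0, 0, 0, 0, 0, 0]
# Model A is cautious: it flags little, with no false alarms.
model_a = [1, 1, 0, 0, 0, 0, 0, 0, 0, 0]
# Model B is aggressive: it catches all spam, with two false alarms.
model_b = [1, 1, 1, 1, 1, 1, 0, 0, 0, 0]

metrics_a = classification_metrics(y_true, model_a)
metrics_b = classification_metrics(y_true, model_b)

for name, metrics in (("A", metrics_a), ("B", metrics_b)):
    print(name, {key: round(value, 2) for key, value in metrics.items()})
```

Model A has perfect precision but misses half the spam, while Model B has perfect recall at the cost of some false alarms. Which one is preferable depends on which kind of error is more costly in the application.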

Conclusion

Precision, recall, and F1 score are essential metrics in machine learning and data analysis. By understanding what each metric means and how to use them effectively, data scientists and analysts can evaluate a model's performance, identify areas for improvement, and make informed decisions about model selection. Remember, precision, recall, and F1 score are not mutually exclusive metrics, but rather complementary metrics that provide a more complete picture of a model's performance.